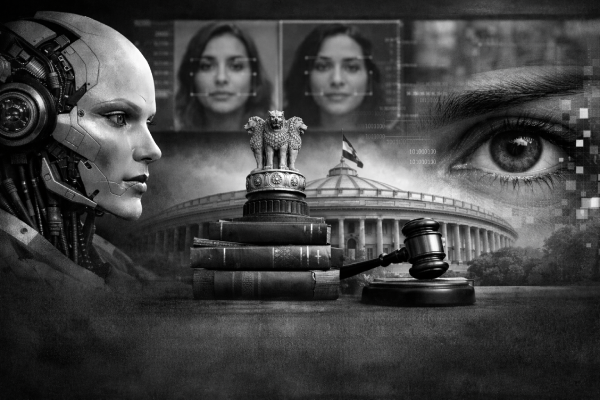

Regulating Artificial Intelligence Content and Deepfakes Under India’s Draft Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Amendment Rules, 2025

Prateek Sisodia

Founding Partner

Published: 22 December 2025


Introduction

On October 22, 2025, the Ministry of Electronics and Information Technology (“MeitY”) released the Draft Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Amendment Rules, 2025 (the “Draft IT Amendment Rules 2025” or “Rules”), which propose amendments to the existing Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021. These proposed amendments represent a strong regulatory step toward tackling the growing challenges posed by generative artificial intelligence (“AI”) and the spread of synthetic or deepfake content. The move stems from the alarming ability of generative AI to produce highly realistic yet fabricated videos, images, and audio that falsely portray individuals saying or doing things they never did. Such technology has increasingly been misused to spread misinformation, invade privacy, tarnish reputations, manipulate public opinion during elections, and even commit fraud. The Draft IT Amendment Rules 2025 are MeitY’s response to rising public concern and to ongoing discussions in Parliament about the urgent need to regulate AI-driven misinformation and deepfakes.

Background and Rationale for the Amendments

The surge in generative AI capabilities has created unprecedented challenges for content authenticity and for the protection of individual rights. Recent high-profile incidents involving deepfake videos of celebrities, politicians, and public figures have demonstrated how synthetic media can be weaponized to spread misinformation, manipulate elections, damage reputations, and facilitate financial fraud.

Concerns raised in both Houses of Parliament regarding deepfakes and synthetic content prompted the Government of India to strengthen the due diligence obligations of intermediaries, particularly Social Media Intermediaries (“SMIs”) and Significant Social Media Intermediaries (“SSMIs”). Judicial intervention also played a key role in prompting this regulatory action. The Delhi High Court’s order in Sadhguru Jagadish Vasudev & Anr. v. Igor Isakov & Ors.1 highlighted the risks posed by synthetic content in spreading misinformation and impersonation, underscoring the need for regulatory clarity and intermediary accountability.

The Deepfake Challenge in India and Personality Rights

The rise of deepfakes (highly realistic, AI-generated audio, video, and images) is creating a complex web of threats within Indian society. This synthetic media is already being misused, both globally and in India, to:

1. Produce non-consensual intimate or obscene imagery, particularly targeting women;

2. Mislead the public with fabricated political or news content, threatening electoral integrity;

3. Commit fraud or impersonation for financial gain; and

4. Undermine trust in legitimate information.

Although India lacks legislation specifically addressing deepfakes, existing provisions under the Indian Penal Code, 1860 (“IPC”), its successor the Bharatiya Nyaya Sanhita, 2023 (“BNS”), and the Information Technology Act, 2000 (“IT Act”) provide legal recourse.

The Draft IT Amendment Rules 2025 do not create a separate law for personality rights but focus on preventing the misuse of a person’s image or voice. India still lacks a specific statute recognizing publicity or personality rights; instead, courts have developed protection in this area by drawing from the principles of privacy, dignity, trademark, and copyright law.

In recent years, courts have strongly defended celebrities against the exploitation of their digital personas. Prominent figures from Bollywood and the music industry, including Amitabh Bachchan, Aishwarya Rai Bachchan, Asha Bhosle, and Arijit Singh, have successfully secured court orders to stop AI platforms from using their likeness or voice without permission.

Recognizing the scale of the problem, the Draft IT Amendment Rules 2025 aim to address this misuse directly. The danger is clear: deepfake technology can be weaponized to spread misinformation, damage reputations, influence elections, and commit fraud, which makes a robust legal response more urgent than ever.

Key Provisions of the Draft IT Amendment Rules 2025

1. Definition of Synthetically Generated Information

The cornerstone of the amendments is the introduction of a comprehensive definition of “synthetically generated information” under Rule 2(1)(wa). The term encompasses “information which is artificially or algorithmically created, generated, modified or altered using a computer resource, in a manner that such information reasonably appears to be authentic or true”. This broad definition intentionally captures deepfakes, AI-generated videos, morphed images, synthetic audio, and any algorithmically manipulated content that could deceive users.

The Draft IT Amendment Rules 2025 further clarify, through a new sub-rule 2(1A), that any reference to “information” in the context of unlawful acts under Rule 3(1)(b), Rule 3(1)(d), Rule 4(2), and Rule 4(4) shall include synthetically generated information. This clarification ensures that AI-generated content is no longer in a legal grey zone and that intermediaries are accountable for its circulation.

2. Mandatory Labelling and Metadata Requirements

Under the new Rule 3(3), intermediaries offering computer resources that enable the creation or modification of synthetically generated information must implement rigorous transparency measures:

(a) Permanent Identification: Every piece of synthetic content must be labelled or embedded with a permanent, unique metadata tag or identifier that cannot be removed, modified, or suppressed.

(b) Visibility Standards: Labels must be displayed visibly or made audible in a prominent manner. They must cover at least 10% (ten percent) of the surface area of visual content, or occupy the initial 10% (ten percent) of the duration of audio content. This is intended to make AI disclosures immediately apparent to users.

(c) Immediate Identification: The labelling system must enable users to identify content immediately as synthetically generated.

Intermediaries are expressly prohibited from enabling the modification, suppression, or removal of these labels or identifiers, ensuring content traceability throughout its lifecycle.
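The 10% thresholds in sub-clause (b) reduce to simple arithmetic. The following is a minimal, illustrative sketch under two assumptions of our own. First, "surface area" is taken to mean the pixel area (width × height) of a displayed image or video frame. Second, audio durations are handled in whole milliseconds. The function names are hypothetical, and the draft Rules do not prescribe any such calculation or tooling.

```python
"""Illustrative arithmetic for the 10% label thresholds in draft Rule 3(3)(b).

Assumptions (not stated in the draft Rules): 'surface area' means the pixel
area (width x height) of the displayed frame, and audio is measured in whole
milliseconds. All function names here are hypothetical.
"""

LABEL_PERCENT = 10  # minimum coverage figure stated in the draft Rules


def min_label_area_px(frame_w: int, frame_h: int) -> int:
    """Smallest label area, in pixels, covering at least 10% of a frame."""
    if frame_w <= 0 or frame_h <= 0:
        raise ValueError("frame dimensions must be positive")
    area = frame_w * frame_h
    # Integer ceiling division avoids floating-point rounding surprises.
    return (area * LABEL_PERCENT + 99) // 100


def visual_label_ok(label_w: int, label_h: int, frame_w: int, frame_h: int) -> bool:
    """True if a rectangular label fits in the frame and covers >= 10% of it."""
    fits = 0 < label_w <= frame_w and 0 < label_h <= frame_h
    return fits and label_w * label_h >= min_label_area_px(frame_w, frame_h)


def min_audio_label_ms(duration_ms: int) -> int:
    """Shortest audible label, in ms, that fills the initial 10% of a clip."""
    if duration_ms <= 0:
        raise ValueError("duration must be positive")
    return (duration_ms * LABEL_PERCENT + 99) // 100


def audio_label_ok(start_ms: int, end_ms: int, duration_ms: int) -> bool:
    """True if the label starts at 0 ms and lasts at least 10% of the clip."""
    return start_ms == 0 and end_ms - start_ms >= min_audio_label_ms(duration_ms)


if __name__ == "__main__":
    print(min_label_area_px(1920, 1080))           # 207360
    print(visual_label_ok(1920, 108, 1920, 1080))  # True
    print(min_audio_label_ms(30_000))              # 3000
```

On these assumptions, a full-width banner on a 1920 × 1080 frame would need to be at least 108 pixels tall. A 30-second audio clip would need an audible disclosure lasting its first 3 seconds.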

3. Enhanced Obligations for Significant Social Media Intermediaries

The Draft IT Amendment Rules 2025 impose additional due diligence requirements on SSMIs that enable the display, uploading, or publication of information on their computer resources. Under the new Rule 4(1A), such SSMIs must:

(a) User Declaration: Require users, before any content is displayed, uploaded, or published, to declare whether the information is synthetically generated.

(b) Technical Verification: Deploy reasonable and appropriate technical measures, including automated tools or other suitable mechanisms, to verify the accuracy of user declarations, having regard to the nature, format, and source of the content.

(c) Prominent Labelling: Where the declaration or technical verification confirms that content is synthetically generated, ensure that it is clearly and prominently displayed with an appropriate label or notice.

The Draft IT Amendment Rules 2025 clarify that if an SSMI knowingly permits, promotes, or fails to act upon synthetically generated information in contravention of these rules, it shall be deemed to have failed in exercising due diligence. These obligations apply only to content displayed or published through platforms, not to private or unpublished material.
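The declaration, verification, and labelling sequence in Rule 4(1A) can be read as a simple pre-publication decision. The following is a minimal sketch only. The `Upload` type, the `detector` callable, and the label wording are all hypothetical placeholders, because the draft Rules do not prescribe any particular verification tool or label text.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical label text; the draft Rules do not fix the wording.
SYNTHETIC_LABEL = "Synthetically generated content"


@dataclass
class Upload:
    content_id: str
    user_declared_synthetic: bool  # the user's answer under Rule 4(1A)(a)


def label_for_upload(
    upload: Upload, detector: Callable[[Upload], bool]
) -> Optional[str]:
    """Return the label to display before publication, or None if not required.

    `detector` is a stand-in for whatever 'reasonable and appropriate
    technical measures' a platform deploys under Rule 4(1A)(b); it returns
    True when it judges the content to be synthetically generated.
    """
    # Rule 4(1A)(b): verify the declaration rather than relying on it alone.
    verified_synthetic = detector(upload)
    # Rule 4(1A)(c): label where the declaration OR the verification
    # indicates that the content is synthetically generated.
    if upload.user_declared_synthetic or verified_synthetic:
        return SYNTHETIC_LABEL
    return None


if __name__ == "__main__":
    # Toy detector for illustration only: flags IDs starting with "gen-".
    def toy_detector(u: Upload) -> bool:
        return u.content_id.startswith("gen-")

    print(label_for_upload(Upload("gen-001", False), toy_detector))
    print(label_for_upload(Upload("cam-002", False), toy_detector))
```

In this sketch, an undeclared upload that the detector flags still receives a label. This mirrors the Rules' requirement that SSMIs verify declarations rather than simply accept them.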

4. Safe Harbour Protection for Intermediaries

A crucial proviso added to Rule 3(1)(b) provides statutory protection to intermediaries that remove or disable access to synthetically generated information based on reasonable efforts or user grievances. This protection ensures that such actions do not affect the exemption provided under Section 79(2) of the IT Act, which grants safe harbour immunity to intermediaries acting in good faith.

Section 79 of the IT Act provides conditional immunity to intermediaries from liability for third-party content, provided they act as neutral hosts without knowledge of illegal content and exercise due diligence. The amendment strengthens this framework by explicitly protecting intermediaries that proactively address synthetic content issues.

Expected Impact

The Draft IT Amendment Rules 2025 aim to make the online space safer and more transparent by clearly defining the accountability of intermediaries and significant social media platforms that host or share AI-generated or deepfake content. They seek to ensure that all publicly available AI-generated media carries visible labels and traceable metadata, making such content easier to identify. At the same time, they protect intermediaries acting in good faith under Section 79(2) of the IT Act, while requiring them to address user complaints related to deepfakes or synthetic material. On SSMIs, users will have to declare whether the content they upload is synthetically generated. These platforms must then verify such declarations through reasonable technical means and add appropriate labels where necessary. Overall, these measures are designed to help users distinguish authentic information from synthetic content, build public trust, and promote India’s vision of an open, safe, trusted, and accountable internet that still respects freedom of expression and encourages innovation.